Nobel Laureate Voices Concerns About the Very Tech He Helped Create

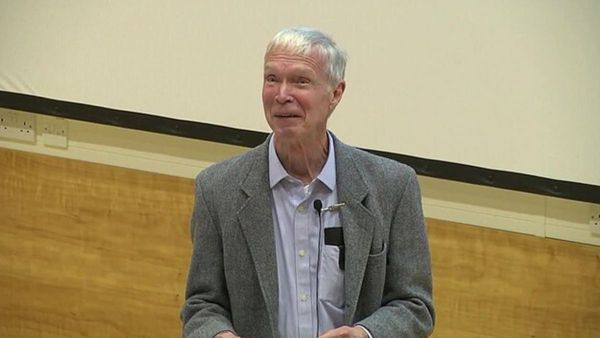

John Hopfield, a co-winner of the 2024 Nobel Prize in Physics, has called recent advances in artificial intelligence (AI) "very unnerving." He made the remarks by video link from Britain to an audience at a New Jersey university. Hopfield, a professor emeritus at Princeton, and his co-winner Geoffrey Hinton both emphasized the need for a deeper understanding of how AI systems work internally, so that the technology does not spiral beyond human control.

Hopfield and Hinton, both pioneers in the field of AI, have made significant contributions that laid the groundwork for modern AI applications. Hopfield introduced the "Hopfield network," a model that illustrated how artificial neural networks could replicate the biological processes of memory storage and retrieval. Hinton, known as the "Godfather of AI," further advanced this concept with his "Boltzmann machine," bringing in an element of randomness that has been crucial for the development of applications such as image generators. Their work not only earned them the prestigious Nobel Prize but also positioned them as leading voices in the ongoing dialogue about AI's future.
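To make the idea of memory storage and retrieval concrete, here is a minimal sketch of a Hopfield-style network in Python with NumPy. It is an illustration under stated assumptions, not a reproduction of Hopfield's original formulation: the function names `train_hopfield` and `recall`, the two 8-unit patterns, and the Hebbian outer-product learning rule with deterministic threshold updates are all choices made for this example. A Boltzmann machine would, roughly speaking, replace the hard threshold with a probabilistic one, which is the "element of randomness" mentioned above.

```python
import numpy as np


def train_hopfield(patterns):
    """Store +1/-1 patterns with the Hebbian outer-product rule (an assumed choice)."""
    patterns = np.asarray(patterns, dtype=float)
    n_units = patterns.shape[1]
    weights = patterns.T @ patterns / n_units
    np.fill_diagonal(weights, 0.0)  # no unit connects to itself
    return weights


def recall(weights, state, max_sweeps=10):
    """Update units one at a time until a full sweep changes nothing."""
    state = np.array(state, dtype=float)
    for _ in range(max_sweeps):
        changed = False
        for i in range(len(state)):
            # Each unit takes the sign of its weighted input from the others.
            new_value = 1.0 if weights[i] @ state >= 0 else -1.0
            if new_value != state[i]:
                state[i] = new_value
                changed = True
        if not changed:
            break
    return state


# Two hypothetical 8-unit "memories", chosen to be orthogonal.
stored = np.array([
    [1, -1, 1, -1, 1, -1, 1, -1],
    [1, 1, 1, 1, -1, -1, -1, -1],
])
weights = train_hopfield(stored)

# Corrupt the first memory by flipping one unit, then let the network settle.
noisy = stored[0].copy()
noisy[0] = -noisy[0]
recovered = recall(weights, noisy)
print(recovered)
```

In this small case the corrupted input settles back onto the first stored pattern, which is the sense in which the network "retrieves" a memory from a partial or noisy cue.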

During his address, Hopfield recalled the emergence of two potent technologies in his lifetime, biological engineering and nuclear physics, both of which carry benefits and risks. He said he is uneasy with any technology that lacks clear controls or whose limitations are not well understood. That unease is the basis of his concern about AI's unchecked progress and the potential consequences of its misuse or mismanagement.

AI development has accelerated sharply, igniting a race among corporations to capitalize on its potential. This rapid evolution has sparked debate over the technology's trajectory and over whether scientists can fully grasp its implications. Hopfield used the example of "ice-nine" from Kurt Vonnegut's novel "Cat's Cradle" to illustrate the unforeseen dangers of advanced technologies. The fictional substance, invented with good intentions, ultimately causes catastrophic environmental destruction, a metaphor for the unpredictable and potentially perilous path of AI development.

Amid these advances, Hinton has emerged as a vocal critic of AI's unchecked growth, cautioning that more intelligent entities might eventually escape human control. He voiced this fear to reporters at a news conference at the University of Toronto, where he is a professor emeritus. He stressed how rarely less intelligent beings manage to control more intelligent ones, suggesting a bleak outlook should AI surpass human intelligence.

To mitigate these risks, Hinton advocates a concerted effort in AI safety research. He urged the brightest young minds to focus on this area and called on governments to compel major companies to provide the computational resources the work requires. His call to action reflects a pressing need to understand, and potentially curb, AI's capabilities before they evolve beyond human oversight.

The warnings issued by Hopfield and Hinton highlight the double-edged nature of AI, a field brimming with potential but fraught with risks. Their calls for a deeper understanding of AI systems, coupled with a strategic focus on safety research, underscore the urgency of addressing these challenges head-on. As pioneers in the field, they offer a reminder of the responsibilities that come with technological advancement and of the need for careful stewardship of AI's future development.