SpiNNcloud Systems Launches First Commercial Neuromorphic Supercomputer, SpiNNaker2

Today marks a significant milestone in the evolution of artificial intelligence and supercomputing technologies, as SpiNNcloud Systems unveils the SpiNNaker2 platform. The announcement, made ahead of ISC High Performance 2024, introduces a supercomputer-scale hybrid AI and high-performance computing (HPC) system inspired by the operating principles of the human brain. Steve Furber, a principal designer of the original ARM processor and of the SpiNNaker1 architecture, leads the development of the platform. SpiNNaker2 is engineered to run AI and other workloads efficiently across a large number of low-power processors.

The first-generation SpiNNaker1 architecture is used by numerous research groups across 23 countries. For SpiNNaker2, institutions including Sandia National Laboratories, the Technical University of Munich, and Universität Göttingen have already placed orders. The next-generation platform builds on IP developed within the Human Brain Project, a billion-euro European Union initiative aimed at creating intelligent and efficient artificial systems.

Fred Rothganger from Sandia National Labs praised SpiNNaker2's capabilities, highlighting its programmable dynamics, event-based communication, and scalability. "SpiNNaker2 is the most flexible neural supercomputer architecture available today," Rothganger stated, expressing enthusiasm for developing applications on this platform.

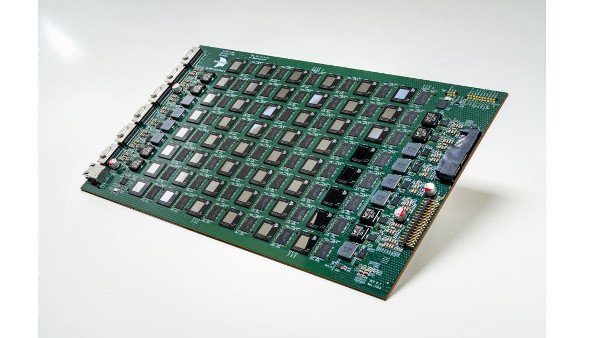

SpiNNaker2's specifications illustrate its scale. Each SpiNNcloud server board carries 48 SpiNNaker2 chips, and each chip contains a mesh of 152 Arm-based cores plus accelerators for neuromorphic, hybrid, and mainstream AI models. The design scales out to multi-rack configurations that support at least 10 billion interconnected neurons firing in real time. The pre-exascale system can deliver up to 0.3 exaops (300 petaops), making it a strong candidate for machine learning workloads.
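As a quick sanity check on the board-level figures above, the number of Arm cores per server board is simply chips per board times cores per chip. The minimal sketch below does only that arithmetic using the two figures from the announcement; the helper name `cores_for_boards` is hypothetical, and the count ignores the on-chip accelerators.

```python
# Figures taken from the announcement: 48 SpiNNaker2 chips per server board,
# 152 Arm-based cores per chip. Accelerators are not counted here.
CHIPS_PER_BOARD = 48
CORES_PER_CHIP = 152


def cores_for_boards(boards: int) -> int:
    """Hypothetical helper: total Arm cores across `boards` server boards."""
    if boards < 0:
        raise ValueError("board count must be non-negative")
    return boards * CHIPS_PER_BOARD * CORES_PER_CHIP


if __name__ == "__main__":
    print(cores_for_boards(1))  # 7296 cores on a single board
```

A single board therefore carries 7,296 Arm cores; multi-rack systems multiply that figure by the number of boards installed.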

Unlike GPU-based solutions, SpiNNaker2 distributes workloads across asynchronous, low-power processing units. According to SpiNNcloud, this approach provides versatility, energy-efficient operation, efficient communication, and lower operating costs. SpiNNcloud is pioneering broad access to this neuromorphic supercomputing architecture at unprecedented scale.

SpiNNaker2 goes beyond traditional neuromorphic systems by incorporating hybrid AI acceleration. This capability is key to building systems that can understand their context and environment, a characteristic DARPA associates with the third wave of AI. Such systems build explanatory models over time, improving their ability to characterize real-world phenomena.

Hector Gonzalez, co-founder and co-CEO of SpiNNcloud Systems, described his vision for the future of brain-inspired AI. "We’re building the most advanced brain-like supercomputing platform on the market," Gonzalez said. This ambition positions SpiNNcloud as a leader in hybrid AI HPC, developing reliable and efficient hybrid AI systems for defense, drug discovery, quantum emulation, smart city applications, and more.

SpiNNcloud's cloud service will be available in the second half of 2024, with full production systems expected to ship in the first half of 2025. Information on early access and preordering SpiNNaker2 systems is available on SpiNNcloud's website. Attendees of ISC High Performance 2024 can also visit SpiNNcloud’s booth L19 for a closer look at the technology.